Santa Monica Awarded Silver & 83/100 Bike Score, But Just How Helpful Are Such City Rankings?

10:29 AM PDT on May 17, 2013

Santa Monica was just awarded a bump in its Bicycle Friendly Community (BFC) classification by the League of American Bicyclists (LAB), from bronze to silver. Coinciding with that news, the walkability web application Walk Score released a new round of Bike Score rankings that now includes Santa Monica, which received an average score of 82.5 (rounded up to 83), high enough to place 5th among the cities analyzed. Now there are probably few people more excited than I am about the real progress being made toward normalizing bicycling in Santa Monica, but I feel compelled to maintain some skepticism toward popular systems of classification for bicycle friendliness.

Relative to other cities ranked in the LAB system, the silver may be an appropriate and deserved award. But as for that Bike Score putting us in the same league as the platinum-awarded cities of Davis, CA and Boulder, CO, I think it's a little early to pop the champagne. Some e-mail blasts, Facebook shares, and a local news post have already been circulating touting Santa Monica's #5 ranking on Bike Score, so I think it's worth putting this in some needed context.

The first and most obvious issue here is that cities are not all alike, and trying to compare them as if they were often ends up as an exercise in fitting square pegs into round holes. Santa Monica has a higher score overall than Portland, OR, but Santa Monica is a small city boxed in by the city of Los Angeles in the patchwork of municipalities that form L.A. County. Our score covers a smaller footprint without any isolated sprawl or hilly regions. So while the core of Portland actually scores higher, the single number spit out for each city in some cases averages in very disparate areas, like steep hillside neighborhoods whose scores are tanked by the topography penalties in the Bike Score methodology. Even among cities more comparable to each other, comparing averages will always leave out important details.

What counts as bicycle friendly, or bikeable, is inherently subjective and can mean very different things to different people. What's bikeable enough for me is not good enough for many others. We should be asking: bikeability for whom? Are we making bicycling accessible for all who wish to ride, or just for certain groups? Do parents feel comfortable allowing their kids to bike to school? At what age? Are facilities accessible only to certain neighborhoods and not others? Are political, social, racial, gender, or economic inequities or other differences apparent in where a city invests in improving bicycling conditions, or in who feels they can ride? These questions and many others are critically important, but they are nuanced and defy simplistic efforts to rank a city as a whole or to compare one city to another.

The League of American Bicyclists awards are arrived at through applications judged by a panel that consults with local reviewers for a sense of the on-the-ground reality of a place. I still recall feeling that the bronze designation in 2009 was not deserved at the time, coming after years of little meaningful progress and on the heels of the SMPD crackdowns against Santa Monica Critical Mass, which burned a lot of people in the local cycling community and the volunteers of Bikerowave, then located in Santa Monica. The acknowledgement this time around at least feels like it accompanies some real positive momentum.

Having observed these Bicycle Friendly Community designations a little more in my time as an advocate, I see them in some ways as a carrot approach, meant to entice cities into deserving their status and reaching further. Now this sort of political nudging through awards is not necessarily a bad thing, especially when it really works. However, when the bar is rather low, as it is in the U.S., those sitting at the top also run out of a next level to reach, and I've occasionally heard bike advocates in Portland lamenting a sense of complacency that comes with sitting at the top. The League is seeking to address this latter concern with a new diamond ranking that would be more competitive with the top bicycling cities in the world, with requirements that would be contextual and challenging to an individual city seeking the award. How that will really play out remains to be seen.

Now Bike Score is entering the picture with a formulaic, data-crunching approach to ranking the bikeability or bicycle friendliness of cities and their neighborhoods. The appearance of impartiality and scientific methodology that comes from spitting out a number derived from other numbers has a certain seductiveness to it. The quest to rank everything and put a definitive number on it is not inherently problematic, and lies at the core of so much of our scientific understanding. However, it often leads urbanist commentary of all kinds, not just on bicycling, into unwarranted cheerleading or jeering territory when we do not critically analyze what sort of data is going in, how it is coming out, and toward what aims.

Purposeful or inadvertent intents and biases underlie how data is processed by software into a result. Just because something is data driven doesn't make it right, and what "right" is, is not a fixed target. Measuring bikeability or bike friendliness isn't like measuring the CO2 ppm in the atmosphere or the polarity of our planet. It's a bit more like that famous answer to the question of what is pornography: you know it when you see it (but someone else might see it a little differently). That Bike Score spits out a result placing Santa Monica so much higher than it sits within the LAB system clearly highlights that the same place can be interpreted in very different ways when different assumptions are made.

Walk Score is a company with investors; it is not an organization simply promoting walkability and bikeability for the sake of doing so. They are selling a product, and their product is providing scores that have become very popular in real estate promotion, as the fallout of the sprawl-driven housing bust coincided with increasing demand for multi-modal places. This is not a judgement on whether this is necessarily a good thing or a bad thing (though there are critics like Walk Farce), but there are differing motivations between a membership advocacy organization like the LAB and a company like Walk Score.

The Bike Score is derived from layering bike facility maps, Census survey data on bike commuters (which is a very simplistic measure), topography, and the proximity to services that is at the core of Walk Score. I do appreciate that heat maps of each attribute can be displayed individually, helping explain what underlies the score, but how each is weighted relative to the others for the final score, and why, is presently rather opaque. How those factors are weighted, and which factors are missing, matters a great deal to people with different outlooks on what bike friendly means for their own willingness to ride or enjoyment of riding.
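
To make the weighting point concrete, here is a toy sketch in Python. The two places, their component numbers, and both sets of weights are all invented for illustration; this is not Walk Score's actual formula, which as noted is opaque on this point. It only shows that the same four ingredients can produce opposite rankings depending on how they are weighted.

```python
# Hypothetical component scores (0-100) for two made-up places.
# None of these numbers come from Bike Score; they are illustrative only.
COMPONENTS = {
    "Flat coastal town": {"lanes": 60, "hills": 95, "destinations": 90, "commuters": 40},
    "Hilly bike city": {"lanes": 90, "hills": 55, "destinations": 80, "commuters": 85},
}


def composite(scores, weights):
    """Weighted average of component scores; weights need not sum to 1."""
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


def rank(weights):
    """Return place names ordered from highest to lowest composite score."""
    return sorted(
        COMPONENTS,
        key=lambda place: composite(COMPONENTS[place], weights),
        reverse=True,
    )


# Two equally defensible (and equally arbitrary) weighting schemes.
EQUAL = {"lanes": 1, "hills": 1, "destinations": 1, "commuters": 1}
TERRAIN_HEAVY = {"lanes": 1, "hills": 3, "destinations": 3, "commuters": 1}

if __name__ == "__main__":
    for label, weights in (("equal", EQUAL), ("terrain-heavy", TERRAIN_HEAVY)):
        print(label, rank(weights))
```

With equal weights the hilly city with more lanes and more bike commuters comes out ahead; weight flat terrain and nearby destinations more heavily and the order flips, without a single inch of bike lane changing on the ground.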

My skepticism toward all of this doesn't mean I think there shouldn't be tools like Bike Score, or that we shouldn't use them at all. What concerns me is when such tools and their results are promoted out of context, or their capabilities overstated and hyped. Bike Score at this time is a crude instrument built on inadequate data. Results, especially those that compare very different cities with different contexts, should be taken with a grain of salt. Too often the urge is to celebrate any exceedingly positive result, no matter how weak the foundation, and I would like to see bike advocacy dialogue, with its growing presence, elevate to a more critical and discerning view of where we are and where we aim to go.

Santa Monica is making progress, and stands out particularly within the region. On that much I think there would be little debate, and the city and the advocates working to foster those changes deserve credit and acknowledgment. I just urge taking a cautious approach before getting too far ahead of ourselves by buying into city rankings that aren't fully baked yet. Really, the whole project of trying to number cities into neatly sequential order is rarely a helpful enterprise on complex or subjective subjects, and I wish we would get away from that mentality altogether.

Stay in touch

Sign up for our free newsletter

More from Streetsblog Los Angeles

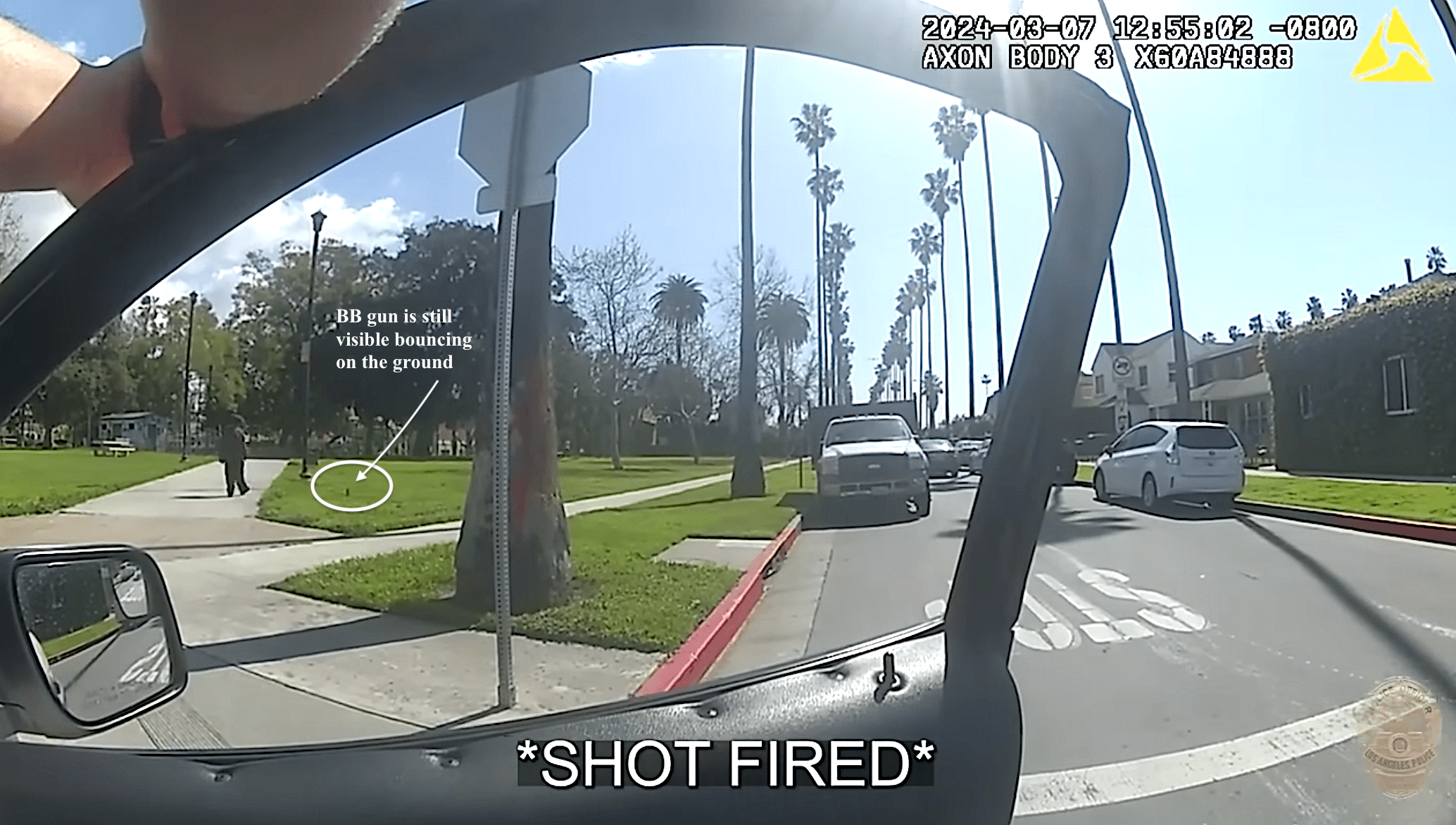

LAPD shoots, strikes unarmed unhoused man as he walks away from them at Chesterfield Square Park

LAPD's critical incident briefing shows - but does not mention - that two of the three shots fired at 35yo Jose Robles were fired at Robles' back.

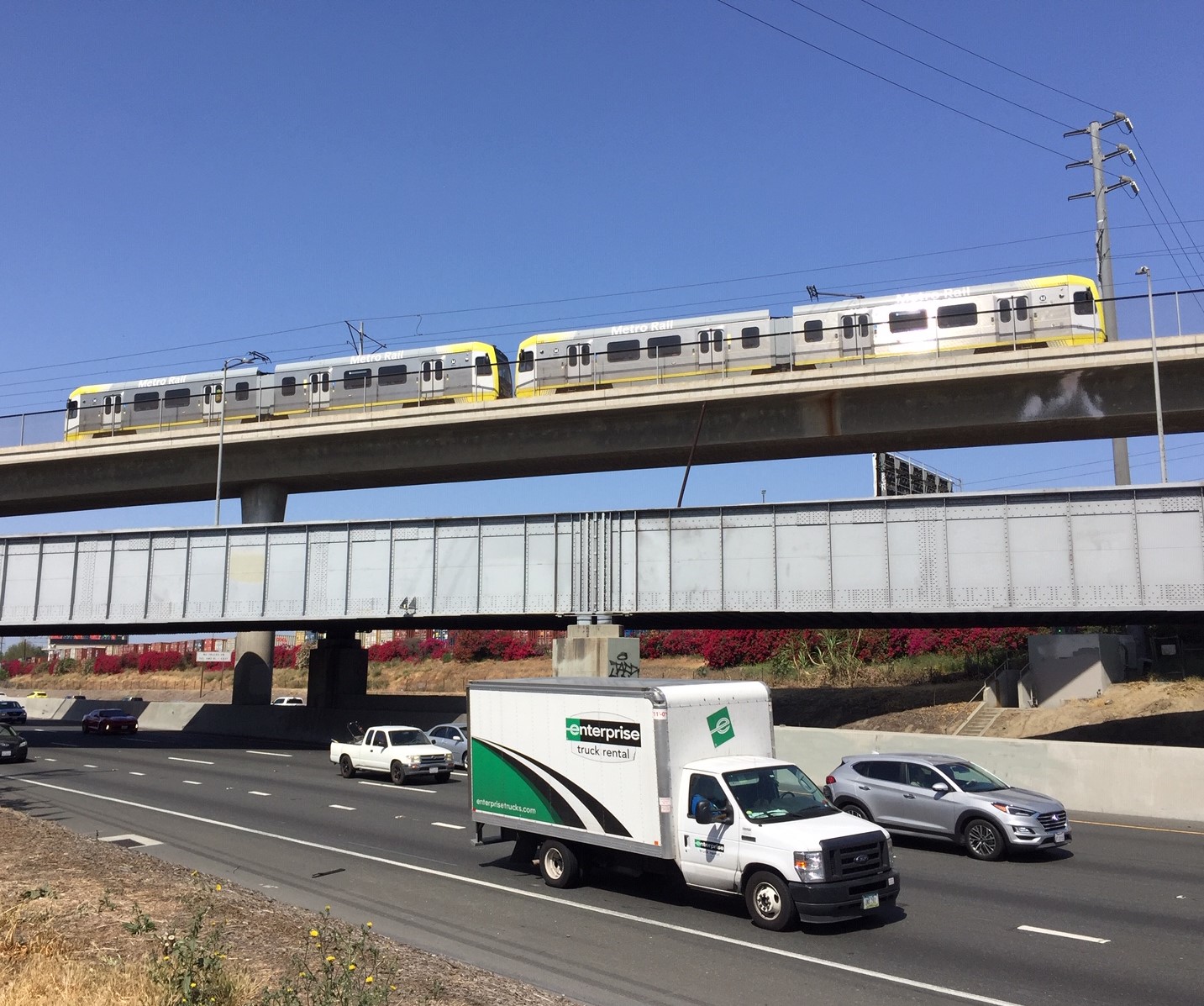

Metro Committee Approves 710 Freeway Plan with Reduced Widening and “No Known Displacements”

Metro's new 710 Freeway plan is definitely multimodal, definitely adds new freeway lanes, and probably won't demolish any homes or businesses

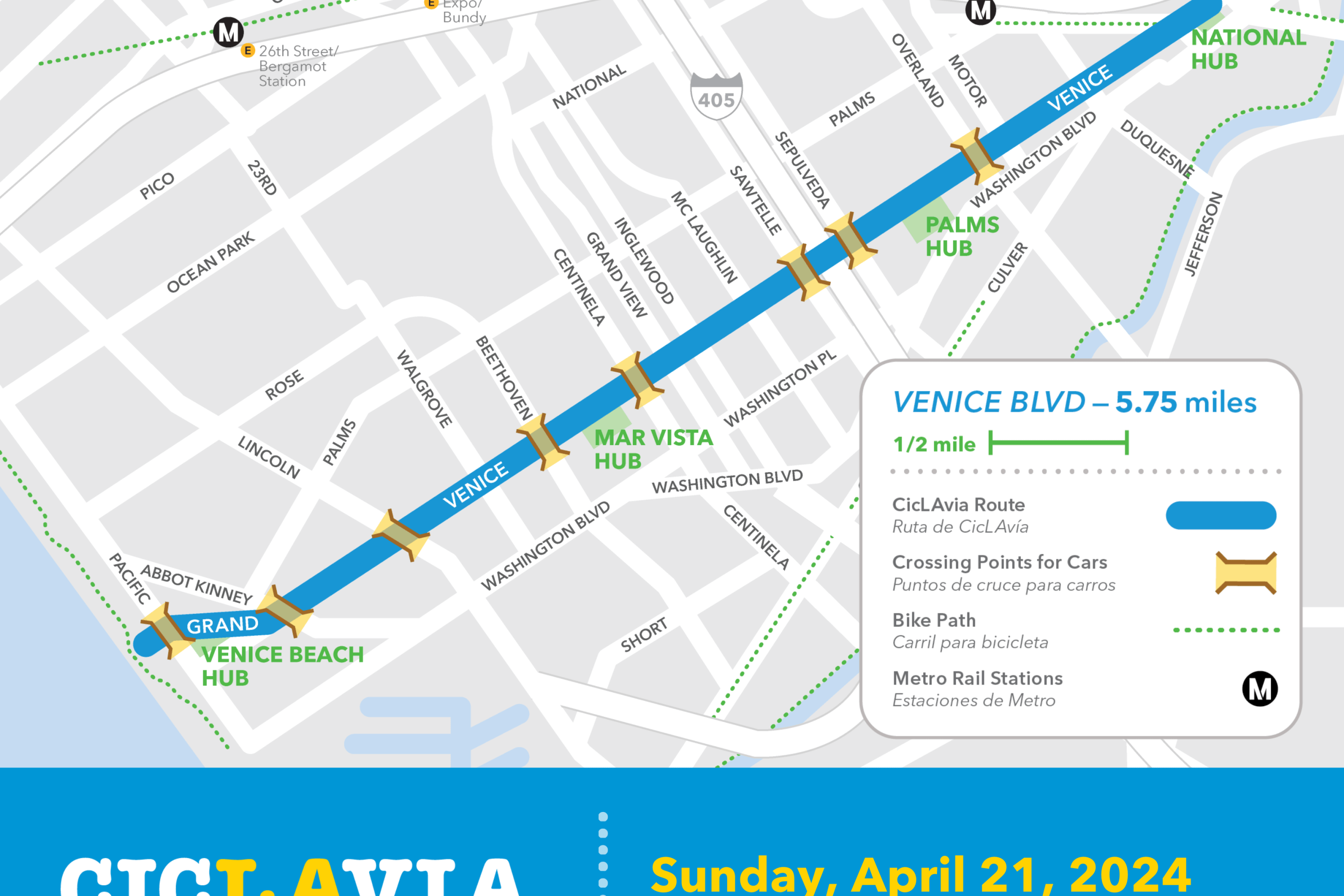

Automated Enforcement Coming Soon to a Bus Lane Near You

Metro is already installing on-bus cameras. Soon comes testing, outreach, then warning tickets. Wilshire/5th/6th and La Brea will be the first bus routes in the bus lane enforcement program.